
3/21/2023

Label encoding in Python

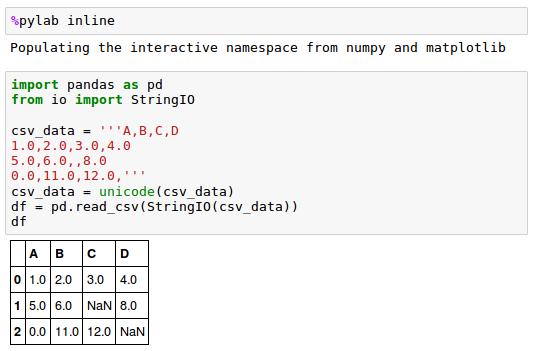

Label encoding converts categorical data into a machine-readable form by assigning a unique number (starting at 0) to each class. This can create a priority problem during training: a label with a higher value is treated as having higher priority than a label with a lower value.

I am not sure what you are going to study, but one-hot encoding is usually preferred over label encoding. Here is my reason: one-hot encoding eliminates the ordinality of the column data, which helps your modeling. A model is not as smart as us humans; it cannot tell on its own whether your input represents an order or a plain value. In other words, if you use label encoding, chances are that your model misreads the input as a pure numeric value and the explainability of your model suffers: in this dimension, the model treats values with larger ordinals as carrying more weight.

But the drawback of one-hot encoding is obvious: it requires more RAM than the original data, especially when there are many unique values.

Here is a Python snippet of a one-hot encoding implementation:

```python
from sklearn.preprocessing import OneHotEncoder

# Ignore categories at transform time that were not seen during fit
enc = OneHotEncoder(handle_unknown='ignore')

# Sample rows of [gender, group]; the values are assumed for illustration
X = [['Male', 1], ['Female', 3], ['Female', 2]]
enc.fit(X)

# Categories learned for each column
enc.categories_
# [array(['Female', 'Male'], dtype=object), array([1, 2, 3], dtype=object)]

# Encode new rows as a dense array; the unseen group 4 becomes all zeros
enc.transform([['Female', 1], ['Male', 4]]).toarray()

# Map one-hot rows back to the original categories
enc.inverse_transform([[0, 1, 1, 0, 0], [0, 0, 0, 1, 0]])
```
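To make the contrast with label encoding concrete, here is a minimal sketch using scikit-learn's `LabelEncoder` next to `OneHotEncoder`. The `colors` column and its values are hypothetical and chosen only for illustration; the point is to show the integer codes that label encoding produces and how the one-hot output grows by one column per unique value.

```python
import numpy as np
from sklearn.preprocessing import LabelEncoder, OneHotEncoder

# Hypothetical sample column; the values are illustrative only
colors = np.array(["red", "green", "blue", "green"])

# Label encoding: one integer per class, assigned in sorted class order
label_enc = LabelEncoder()
labels = label_enc.fit_transform(colors)
print(label_enc.classes_)  # ['blue' 'green' 'red']
print(labels)              # [2 1 0 1]

# The codes suggest red (2) > green (1) > blue (0), an order the data does not have.

# One-hot encoding: one binary column per class, so no order is implied,
# but the output is as wide as the number of unique values
onehot_enc = OneHotEncoder(handle_unknown="ignore")
onehot = onehot_enc.fit_transform(colors.reshape(-1, 1)).toarray()
print(onehot.shape)        # (4, 3)
```

With three unique values the one-hot matrix has three columns; a column with thousands of unique values would produce thousands of columns, which is where the extra memory cost comes from.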
